One fish, two fish, what the heck are you, fish?

A transparent siphonophore hovers weightlessly on the monitors before us, suspended in a silently shifting soup of marine snow and sapphire sea: a delicate, living Chihuly sculpture made of meticulously organized water and proteins.

“I can’t tell if that’s the head or the tail...”

“That’s okay, neither can the algorithm.”

“Is that the video game? ‘Is this a head? Or a butt?’”

Soft chuckles rumble through the cabin, muted by equipment, proximity, and masks. It’s late October 2021, and there’s a contagious joy onboard: field operations aboard the research vessels of our research and technology partner, the Monterey Bay Aquarium Research Institute (MBARI), are underway again after their pandemic hiatus. I’ve been invited along as an enthusiastic fly on the wall while two of the Aquarium’s biologists join the MBARI crew for the day to search the sea for gelatinous animals that could join our Into the Deep/En lo Profundo exhibit.

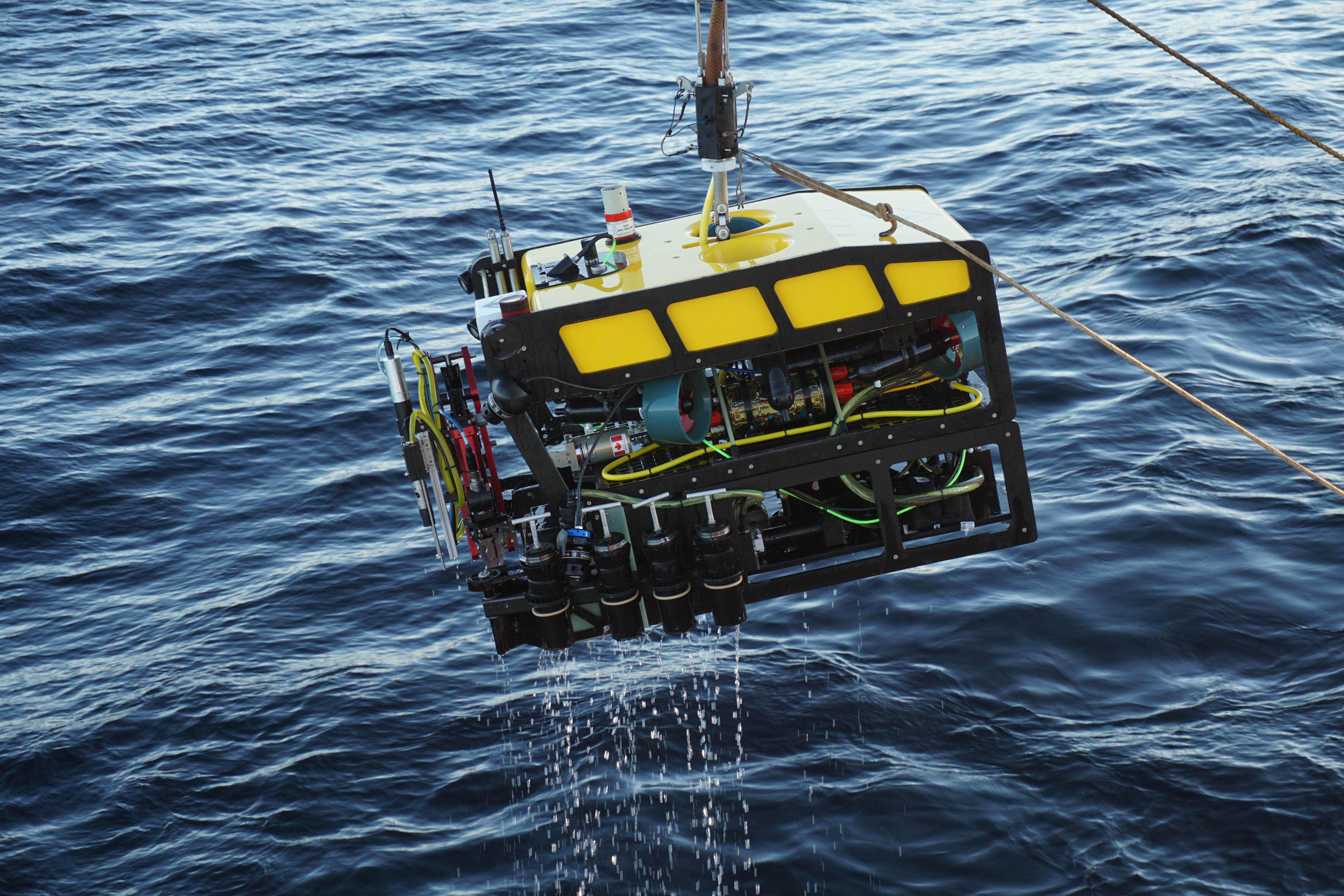

The cabin itself is actually the interior of a small cargo container resting on the deck of the research vessel Rachel Carson. The R/V Rachel Carson is normally paired with the much larger remotely operated vehicle (ROV) Ventana, which is piloted from a dedicated onboard control room; on this trip, though, it plays host to the aptly named MiniROV.

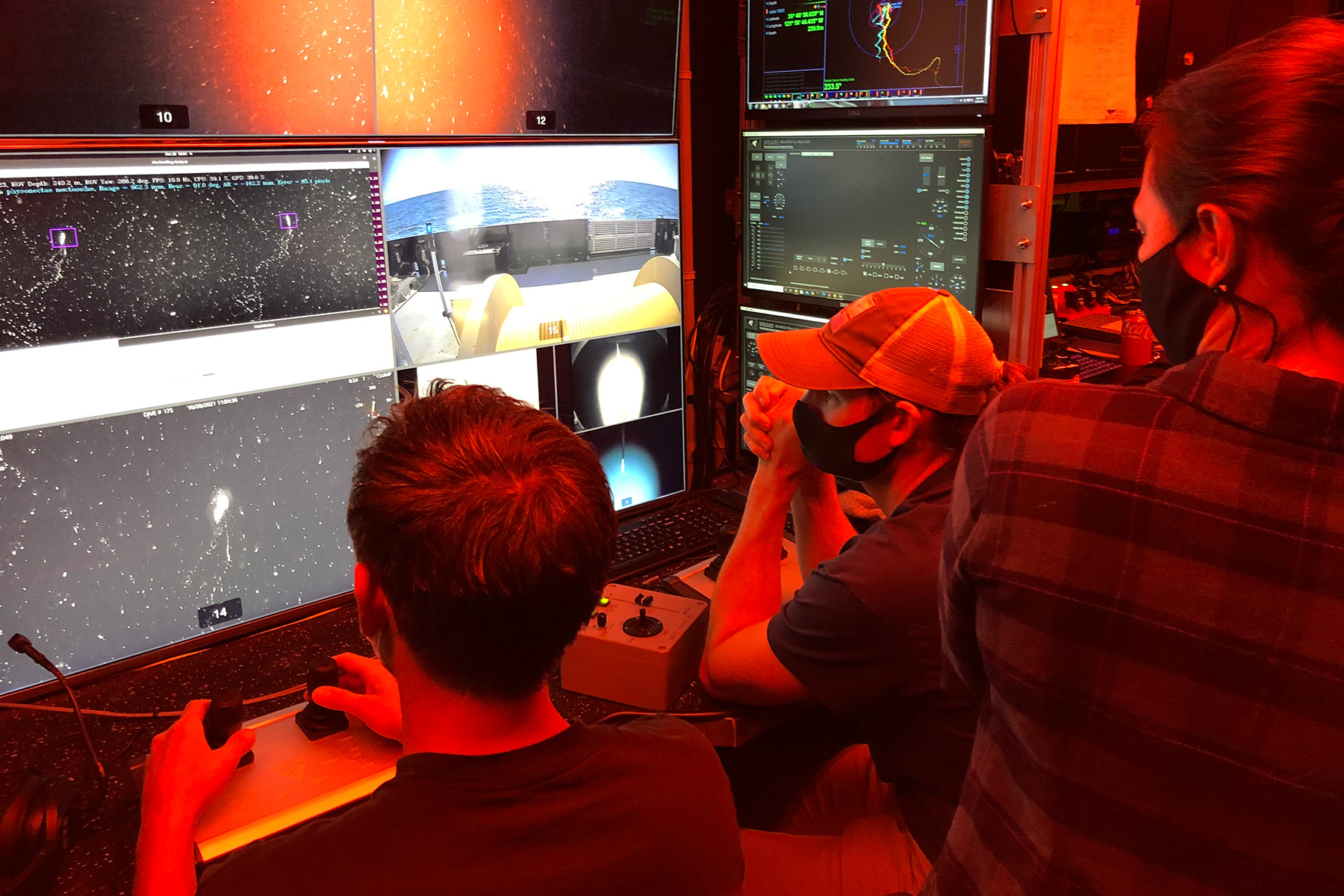

A third of the size of its older ROV siblings Ventana and Doc Ricketts, the MiniROV comes with its own portable control room built inside a shipping container, so it can be transported and deployed on expeditions around the world. Inside this metal hub, one wall is devoted entirely to dozens of computer monitors and towers, leaving just enough room for people to stand and maneuver around scattered bench stools and office chairs. Warm red light fills the space, promising to keep our vision sharp for spotting tiny treasures drifting in the dark.

Because of the small size of MBARI’s MiniROV, it can be transported and deployed around the world.

© Joost Daniels/MBARI

Inside the MiniROV’s control room, walls of monitors keep tabs on the various instrumentation and cameras on the robot and ship.

© 2024 Jo Simpson/Monterey Bay Aquarium

On one of the monitors, two hollow boxes appear, one cyan and one magenta, framing the fragile chain of colonial zooids that makes up the siphonophore’s body; the boxes stutter, flickering in and out as a computer attempts to identify the animal within.

We spend another few minutes recording this delicate drifter, the largest organism we’ve spotted (and will spot) all day, as the MiniROV, expertly piloted by dexterous hands and what looks like a computer-game joystick from the ’90s, maneuvers around its meters-long body. Algorithm and scientists sated, we move on, continuing our expedition through the midwater of Monterey Bay.

We’re out at sea for nine hours that day, leaving before sunrise and returning home as the sun begins to set on the late-autumn evening. That one trip yields hours of deep-sea footage, ready to be cataloged and dissected back on shore.

MBARI has filmed nearly 30,000 hours of deep-sea video, all combed through and cataloged by experts in its Video Lab, who manually identify the animals, environments, and objects spotted on each dive. Over the last 35 years, that team has tagged more than 10 million animals and other features of interest, building the largest library of annotated deep-sea video data in the world.

But on this trip, engineers and scientists were testing a new piece of technology to assist those experts—a machine learning algorithm trained to identify marine organisms in real time.

Spinning a new kind of sea spider web

For the last three years, MBARI Principal Engineer Kakani Katija has led a team creating an interconnected suite of software tools to make ocean research more accessible using artificial intelligence (AI). Collectively called Ocean Vision AI, the program aims to bring the collaborative power of programmers, marine scientists, and enthusiasts together to help us better understand our changing ocean.

Ocean Vision AI comes in three parts: FathomNet, a database of expertly labeled images and machine learning models that can be used to identify ocean animals; the Portal (launching summer 2024), an online, collaborative tool that uses AI to process ocean imagery; and FathomVerse, the final piece of the Ocean Vision AI puzzle, which aims to involve the public in the scientific process. While two of the three products concentrate on the needs of ocean scientists, all three rely on one another, each system feeding into and being nourished by the others: its own little digital ecosystem.

Researchers in MBARI’s Video Lab have combed through thousands of hours of deep-sea footage to identify and label animals and objects.

© 2022 MBARI

Machine learning models must be trained to identify and classify animals like this giant larvacean. FathomVerse allows players to interact with real underwater images and work alongside scientists to improve the artificial intelligence that helps them study ocean life.

© 2002 MBARI

It takes a digital village

The secret to a good machine learning model is just that: learning. An algorithm needs as much data as possible to understand exactly what it is supposed to be doing, and as a model is trained on more examples, it improves. FathomNet and the Portal, which rely on machine learning to identify marine life effectively, need high-quality, reliable data, and a lot of it. MBARI seeded FathomNet with nearly 100,000 labeled images collected by a variety of research teams to help train its models. Even at that volume, though, those images are limited to the scope of research MBARI scientists have done and the locations they’ve visited; a good AI needs more.

“We have to move beyond manual annotation so that we can scale our capacity to process this information,” Katija says, pointing to the hundreds of thousands of hours of existing footage captured by ROVs, autonomous underwater vehicles, and other underwater cameras around the world beyond what MBARI alone has collected, plus new footage being recorded all the time.

Cozy sci-non-fi

Enter &ranj Serious Games—a Netherlands-based game development studio focused on positive behavioral change through play—and Internet of Elephants—a nature tech enterprise based in Kenya focused on rekindling relationships between people and wildlife.

Drawing inspiration from other community science apps such as iNaturalist and eBird, which leverage nature enthusiasts to identify images of animals and plants around the world, the Ocean Vision AI team worked with &ranj and Gautam Shah of Internet of Elephants to develop FathomVerse. In this new mobile game, players interact with real images collected by researchers, identifying marine life as they explore and learn. Their gameplay, in turn, helps improve the artificial intelligence used in FathomNet and the Portal.

Getting people on board with the meticulous, often repetitive, task of labeling thousands of underwater images, however, is easier said than done. Over 18 months, the ambitious team tackled the challenge of creating an engaging and rewarding gameplay experience, while maintaining the scientific quality and integrity needed to get viable data out of the game that can go on to train machine learning algorithms.

After testing an initial beta version of the game, a core group of the MBARI team, Gautam Shah, and I traveled to Rotterdam for an intensive, in-person workshop with &ranj at their headquarters to decide what version one of the game would include. We drafted grand plans and tearfully sacrificed others, staying up until 2 a.m. in the sweltering belly of a riverboat-turned-house-rental, myriad sticky notes strewn about, trading puns and song parodies to keep each other sane. Those plans, and some of the puns, are ultimately what’s in the game today.


Described as cozy sci-non-fi, FathomVerse progresses at whatever pace each player sets. Motivated players can power through minigames, learning to identify nearly 50 groups of ocean animals and racking up points and awards. Casual gamers, meanwhile, can take things at a more relaxed pace: saving favorite images and curating a personal gallery while listening to ambient lo-fi music. “It’s designed to be easy to pick up. You can enjoy it with your morning coffee or while waiting for the bus,” says Ocean Vision AI Engagement Coordinator Lilli Carlsen.

Because the game is tied to Ocean Vision AI’s other platforms, FathomVerse players can be among the first to view new imagery collected by researchers exploring the ocean. As more animals and images are identified and confirmed by the FathomVerse community, the AI that researchers use will learn and change.

“The good kind of AI”

As people reckon with the role AI might come to play in our lives, early beta testers, recruited through MBARI and through the Monterey Bay Aquarium’s Instagram, Tumblr, and Discord communities, have given FathomVerse an encouraging reception: its 2023 beta launch drew nearly 1,400 players from 65 countries.

As Tumblr user @littlehidingowl put it, “It’s the good kind of AI!!!” Another, @inner-space-oddity, added, “Now THIS is the kind of AI I can support.”

Involving the public in the process of creating and training AI has helped foster a sense of trust, in both science and machine learning, that’s vital to helping us better understand our world in the future.

An anonymous user on Discord says, “It’s exciting to know that what I’m doing while playing a silly little game can make a difference. Everything else in the world can be scary, but maybe, in this one small way, I can help.”

FathomVerse allows anyone with a smartphone or tablet to take part in ocean exploration and discovery.

© 2024 Lilli Carlsen/MBARI

Ocean exploration, for all

The FathomVerse team’s decision to build a mobile game, specifically, was also intentional. Ocean exploration is already expensive enough, and the team didn’t want cost to become one more barrier. As Katija puts it, “Our team wanted to design ways to tap into widespread enthusiasm for ocean animals while at the same time inviting a broader community of people to take part in ocean exploration and discovery.”

By using technology most people already have in their pockets or at home as a tool for science, FathomVerse hopes to enlist thousands, if not tens of thousands, of people around the globe to help us understand our ocean. Removing the cost barrier of needing a specific gaming console, or even a computer, to play opens cutting-edge community science to far more of the public. Suddenly, all anyone needs to be a deep-sea researcher is a phone or a tablet.

And the more ocean researchers and enthusiasts there are, the better.

Katija sums it up best: “With a triple threat of climate change, pollution, and overfishing, it’s more urgent than ever that we understand our changing ocean. We need all hands on deck to study the ocean at this critical crossroads.”

FathomVerse is now available to download on the Google Play Store and Apple App Store.

